If you are not familiar with the oc command, refer to OpenShift - Getting Started with the oc command.

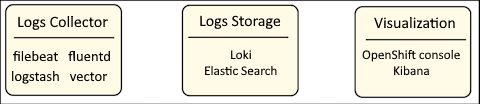

Logging in OpenShift is split across several systems and services, each of which serves a specific purpose.

- Collecting log data - typically done with Filebeat, Fluentd, Logstash or Vector

- Storing log data, for example, in an Amazon Web Services (AWS) S3 Bucket - typically done with Loki or Elasticsearch

- Visualizing and querying log data - typically done in the OpenShift console or in Kibana

The following abbreviations are used as shorthand for a particular combination of systems that collect, store, and visualize log data.

- EFK (Elasticsearch, Fluentd, Kibana)

- ELK (Elasticsearch, Logstash, Kibana)

- EVK (Elasticsearch, Vector, Kibana)

- LFK (Loki, Fluentd, Kibana)

- LLK (Loki, Logstash, Kibana)

- LVK (Loki, Vector, Kibana)

The first step in configuring OpenShift to collect log data from the resources in your cluster, such as nodes and pods, is to install an Operator that collects the log data. This is often the Cluster Logging Operator. The oc get operators command can be used to display the Operators you have installed.

~]$ oc get operators
NAME                                AGE
cluster-logging.openshift-logging   2y13d

Next, you will probably install an Operator that stores the log data, such as the Elasticsearch Operator or the Loki Operator. That Operator can then be configured to store the log data in some sort of object storage, such as an Amazon Web Services (AWS) S3 Bucket.

Running the oc get operators command again should now also list the Operators used to store log data.

~]$ oc get operators
NAME                                                AGE
cluster-logging.openshift-logging                   2y13d
elasticsearch-operator.openshift-operators-redhat   2y13d
loki-operator.openshift-operators-redhat            320d
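If you want to check for a particular Operator in a script rather than by eye, the output of oc get operators can be filtered. The following is a minimal sketch, not an official approach. The has_operator function name is made up for this example, and it assumes the two-column NAME/AGE output shown above.

```shell
# has_operator PREFIX  (hypothetical helper, not part of the oc CLI)
# Reads `oc get operators` output on stdin and exits 0 if any listed
# Operator name starts with PREFIX. The NAME/AGE header row is skipped.
has_operator() {
  awk -v prefix="$1" '
    NR > 1 && index($1, prefix) == 1 { found = 1 }
    END { exit found ? 0 : 1 }
  '
}

# Usage against a real cluster (left commented out so that this block
# does not need cluster access):
#   oc get operators | has_operator loki-operator && echo "Loki Operator is installed"
```

Matching on the start of the name means you do not need to know which namespace suffix (for example, openshift-operators-redhat) the Operator was installed with.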

The Cluster Logging Custom Resource (CR) is used to define the system that will collect log data (vector in this example), where the log data will be stored (Loki in this example), and where the log data can be visualized and queried (the OpenShift console in this example).

apiVersion: logging.openshift.io/v1
kind: ClusterLogging
metadata:
  name: instance
  namespace: openshift-logging
spec:
  collection:
    type: vector
  logStore:
    lokistack:
      name: logging-loki
    type: lokistack
  visualization:
    type: ocp-console
    ocpConsole:
      logsLimit: 15
  managementState: Managed
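The logStore section above points at a LokiStack named logging-loki, which is a separate resource managed by the Loki Operator. As a rough sketch of what that resource might look like when backed by an S3 Bucket, the following writes a manifest to a file. The Secret name (logging-loki-s3), the size (1x.small), the schema version, and the storage class (gp3-csi) are assumptions for illustration only. Check the values supported by your Loki Operator version and cluster before using it.

```shell
# Write an example LokiStack manifest to lokistack.yaml.
# Every value marked "assumption" is illustrative and must be adapted.
cat > lokistack.yaml <<'EOF'
apiVersion: loki.grafana.com/v1
kind: LokiStack
metadata:
  name: logging-loki            # must match spec.logStore.lokistack.name in the ClusterLogging CR
  namespace: openshift-logging
spec:
  size: 1x.small                # assumption: choose a size your Loki Operator version supports
  storage:
    schemas:
    - version: v12              # assumption: schema version depends on the Loki Operator release
      effectiveDate: "2022-06-01"
    secret:
      name: logging-loki-s3     # assumption: an existing Secret holding the S3 bucket details
      type: s3
  storageClassName: gp3-csi     # assumption: replace with a storage class available in your cluster
  tenants:
    mode: openshift-logging
EOF

# Then, against a real cluster:
#   oc apply -f lokistack.yaml
#   oc get lokistack logging-loki -n openshift-logging
```

Keeping the LokiStack name identical to the name referenced in the ClusterLogging CR is what ties the collector to the log store.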
